Writing an Image Processing Filter

In this post we’re going to cover the basics of how to write an image processing filter using GLSL. We’ll be producing a variant of an existing filter. Here’s a look at our final project:

We’ll be using the GPUImage framework so that we can jump straight into writing GLSL shaders and avoid all the boilerplate misery that OpenGL requires. You can get the sample project here.

GPUImage Framework is Awesome!

I’ve been on this quest now for several months to learn OpenGL. As I stated in my previous post, I got really interested in image and video processing because of my work with Core Image for Ray Wenderlich’s book (iOS 5 by Tutorials). As I was thinking about how to use the GPU to do real time video processing, Brad Larson came along and released his GPUImage framework on Github.

The advent of this framework was like something out of The Secret. GPUImage was exactly what I wanted, but was not quite skilled enough to create myself. I felt as though I had summoned it from the great universe. (I’ve been working on summoning a million dollars, but so far, no dice!)

GPUImage is an open source framework aimed at the same task as Core Image. The advantages of GPUImage over Core Image:

- GPUImage is faster than Core Image

- GPUImage allows you to write your own filters (Core Image allows this on OS X, but not on iOS yet.)

- GPUImage uses GLSL instead of a proprietary language

- GPUImage is open source

The only drawback is that GPUImage doesn’t yet include all the filters that Core Image offers. This will only be true for a while longer, however, as GPUImage has new filters added each week. It already offers some really cool filters that Core Image does not (like the epically awesome Kuwahara filter that makes a photo look like an oil painting).

Learning OpenGL from the Ground Up

One thing I’ve realized as I’ve attempted to learn OpenGL is that I don’t learn very well starting at the beginning and working my way up the conceptual ladder. This is the way we’re most often taught in school, but I’m skeptical of how effective it is. You can think of this approach as learning to play the piano by starting with scales and dexterity exercises. It’s boring.

GPUImage is a good way to learn about OpenGL because all the low level, boilerplate code is already written. You can jump right to the fun stuff (writing new filters) and in a short time see the results. Then, if you’re interested, you can start adjusting the lower level stuff as you see the need.

I’ve sat through lectures about how OpenGL is a state machine and how matrix math works and I wasn’t able to pay attention long enough to get to the good stuff (actually building something). I’ll confess, I still don’t have a great grasp of matrix math, but I can understand how a matrix can be used to rotate, scale, and distort a primitive, and all that stuck only when I started playing with it.

There are three resources that I would suggest referring to, or reading, if you get lost at any point here. Jeff LaMarche has a series on OpenGL that’s very well written and approachable. Ray Wenderlich has a number of tutorials on OpenGL ES 2.0 (anything Ray does is very easy to follow along with). Finally, there’s a more technical resource on OpenGL here that has some detail that the previous two do not have. If mine is your first article, I would suggest reading Ray’s stuff first (at a minimum), because you will need some context to understand some of what I refer to here.

In this post I’m going to put together a simple app that captures live video, runs it through a filter, and displays it on the screen. I’ll also go over the basic principles needed to write your own GLSL based filter, using the polar pixellate filter as an example.

There are lots of other things you can do with the framework including recording video, playing video files and filtering them, and loading and saving still images. The project has good sample code showing you how to do all these things in the examples section. Thanks go to Brad Larson for creating an awesome framework and for providing some great code samples. It’s really easy to break into it.

Basic Concepts of OpenGL ES 2.0

OpenGL is a standard for drawing three dimensional worlds onto a (two dimensional) computer screen. To accomplish this, one supplies three dimensional geometry (the model), color, textures, lighting, and other information to the hardware, which translates the scene into something represented on screen. OpenGL later added the programmable pipeline, which replaced certain parts of the process with code that can be modified by the user. These programs are called shaders. They gave rise to many interesting lighting, animation, and other visual effects that were difficult or impossible before. One application of the programmable pipeline is the ability to apply filters to photos or video.

Shaders are small programs written in GLSL (OpenGL Shading Language), which looks very much like C, that are run in parallel for each unit (vertex or fragment) of the data. There are two kinds, and they run one after the other. The first is the vertex shader, where we can alter the standard behavior of the vertices to create different effects. One commonly seen effect is waving grass. The shader is passed vertices (the units that make up polygons) and can be programmed to animate their positions according to some desired behavior. The second is the fragment shader, which decides the color of each fragment; that is where most of our work will happen.

GPUImage Vertex Shaders

Most of the time with image manipulation we are working with a two dimensional image, so we won’t be doing very much with the vertex shader portion of the pipeline. The image is displayed on a ‘quad.’ A quad is just a rectangle that is drawn as a surface onto which we project the image. This is necessary because in OpenGL everything exists in three dimensional space. If we want to draw something, we first have to create a surface to draw it on. OpenGL ES 2.0 can only draw triangles (well, it can also draw points and lines, but not rectangles), so a quad is constructed by drawing two triangles.

The standard vertex shader in GPUImage looks like this:

attribute vec4 position;

attribute vec4 inputTextureCoordinate;

varying vec2 textureCoordinate;

void main()

{

gl_Position = position;

textureCoordinate = inputTextureCoordinate.xy;

}

There are three kinds of variables that get passed into a shader: attributes, varyings, and uniforms. Attributes are passed in per vertex. Attributes usually contain information like the vertices of the geometry we’ll draw, the vertices of the texture that we’re mapping to those geometries, color of the vertices, etc.

Varyings are variables that are declared and set in the vertex shader and then passed into the fragment shader. At the vertex level, there is one value for each vertex. When passed to the fragment shader, there are potentially many fragments for each vertex, so the varying gets interpolated: each fragment receives a different value based on its proximity to the relevant vertices.
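To make the interpolation idea concrete, here is a minimal CPU-side sketch in Python. It only interpolates along a single edge between two vertices; a real GPU interpolates across the whole triangle, so treat this as an illustration of the idea rather than what the hardware does. The function names are my own and are not part of OpenGL or GPUImage.

```python
def lerp(a, b, t):
    """Linearly interpolate between a and b for t in [0, 1]."""
    return a + (b - a) * t


def interpolate_varying(v0, v1, t):
    """Interpolate a vec2-style varying between two vertices.

    v0 and v1 stand in for textureCoordinate at two vertices; t says how far
    the fragment sits along the edge from v0 (0.0) to v1 (1.0).
    """
    return tuple(lerp(a, b, t) for a, b in zip(v0, v1))


left_vertex = (0.0, 0.0)
right_vertex = (1.0, 0.0)

# A fragment a quarter of the way along the edge gets a quarter of the value.
quarter = interpolate_varying(left_vertex, right_vertex, 0.25)
```

A fragment sitting right on a vertex (t of 0.0 or 1.0) simply gets that vertex’s value.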

Uniforms are passed in by us and exist at the program level. They are the same across all vertices and fragments.

In the GPUImage framework we pass in two quads. The first quad is the geometry or the surface we’ll be creating, the second is the set of coordinates that we’ll use from the texture.

Basically, in OpenGL we are drawing geometric forms in three dimensional space. Then we are mapping portions of a texture (a supplied image) to those geometric forms. I’m not going to explain all the details of texturing because it has been covered very well previously. If you need to know more, I recommend the resources at the beginning of this post.

The vertex shader is usually very simple. We have a rectangular texture and a rectangular geometry, we’re just mapping the texture to the geometry and passing it through to the fragment shader. There are a couple of exceptions to this where we will do some other things in the vertex shader, but in those cases we are just trying to precalculate a few things using the vertex shader for performance reasons. In those cases we are still just passing the image through to the fragment shader to do all the real magic.

Because most of the time we’ll use this vertex shader, we aren’t going to include it in our filter subclass. It’s the default vertex shader for the GPUImageFilter class. I just wanted to show it to you so you know that there is a vertex shader always running, but we don’t need to concern ourselves with it.

You’ll notice that we create a ‘textureCoordinate’ varying of type vec2. This is passed to our fragment shader, where it is available for every fragment (you can think of fragments as pixels; they don’t always map 1:1 to the physical pixels on our phone’s screen, but they act like pixels). We’ll be using that value a lot. A vec2 is a pair of floats, much like a CGPoint. We’ll also be dealing with vec4 quite a bit, for example, when we manipulate color (r, g, b, alpha).

The line gl_Position = position maps our input attribute for the geometry position to the built-in variable gl_Position. We must write to gl_Position in the vertex shader for the geometry to get into the internal pipeline. The fragment shader has a similar built-in variable called gl_FragColor. This is the variable we write to in order to control the color of each fragment.

All of the above is just background really. I could go over how the GPUImage framework sets up the framebuffer objects and how it sets and controls state in OpenGL, but the truth is, you don’t need to worry about it in order to write your own filter. This is what’s great about it.

We’re going to start writing our own filter class. When we’re done we’ll create a sample view controller class to filter the incoming video and display the result.

Setting up a new GPUImage Project

We’ll need to set up a new project. Go to File->New->Project->Single View Application. Name the project whatever you want. You can tick (or leave ticked) storyboards and ARC. Make the project iPhone only.

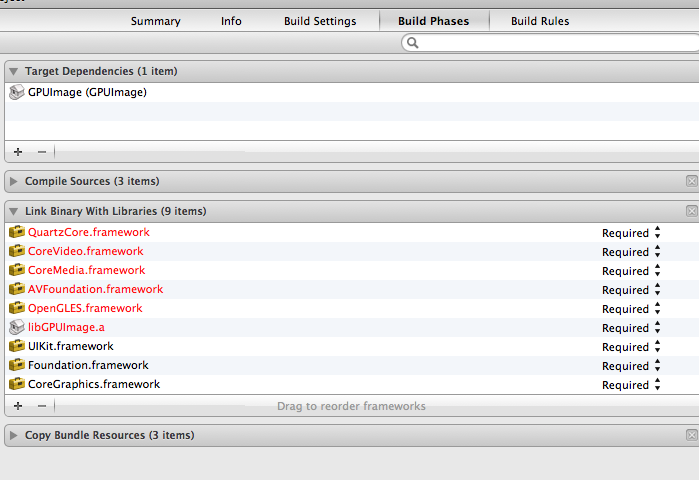

Right click on the frameworks folder and choose Add Files to “whateverprojectname.” Navigate to and choose the GPUImage project. Now we need to add the target as a dependency and add a bunch of frameworks and the GPUImage library to the Link Binary With Libraries section. Add the following frameworks so your screen looks about like this:

Finally, in the build settings, we need to add -ObjC to the other linker flags and put the location of the GPUImage/framework folder in the header search paths. You should now be all set up.

Now we’re ready to write our own class. We’re going to re-create the polar pixellate shader and add a twist. We’ll add posterize functionality to it as well. Posterize reduces the number of colors displayed.

Polar Pixellate Posterize

The filter uses a polar coordinate system to pixellate an incoming image.

The first thing to do is create a new subclass of GPUImageFilter. Call the subclass GPUImagePolarPixellatePosterizeFilter.

Add the following code to the header file:

#import "GPUImageFilter.h"

@interface GPUImagePolarPixellatePosterizeFilter : GPUImageFilter {

GLint centerUniform, pixelSizeUniform;

}

// The center about which to apply the distortion, with a default of (0.5, 0.5)

@property(readwrite, nonatomic) CGPoint center;

// The amount of distortion to apply, from (-2.0, -2.0) to (2.0, 2.0), with a default of (0.05, 0.05)

@property(readwrite, nonatomic) CGSize pixelSize;

@end

We’re going to pass two uniform variables into this filter. The center variable is the point from which the polar pixellate calculation originates. You’ll see that the default is 0.5, 0.5. This is the center of the screen. The coordinate system within OpenGL ranges from 0.0, 0.0 to 1.0, 1.0 with the origin in the bottom left hand corner. The pixelSize value controls how big the pixel cones are. Because we’re using a polar coordinate system, the width is a step along the radius (distance from ‘center’) and the height is a step in angle, measured in radians.

Let’s take a step back . . . In order to set up a new filter, we need a couple of things. By subclassing the GPUImageFilter class we get all the apparatus that sets up the OpenGL framebuffers and state. But we still need to write the shader itself and create the handles for any uniforms that we want to include. We’ll also create an instance variable to store each value that we pass into a uniform. So we need both the GLint that acts as a handle to the uniform and an instance variable to hold the data for that uniform (this is for convenience). We could set the uniforms by hand, but why would we do that?

Our First Shader

On to the first part of the implementation file. Add the following code before the @implementation line:

NSString *const kGPUImagePolarPixellatePosterizeFragmentShaderString = SHADER_STRING

(

varying highp vec2 textureCoordinate;

uniform highp vec2 center;

uniform highp vec2 pixelSize;

uniform sampler2D inputImageTexture;

void main()

{

highp vec2 normCoord = 2.0 * textureCoordinate - 1.0;

highp vec2 normCenter = 2.0 * center - 1.0;

normCoord -= normCenter;

highp float r = length(normCoord); // to polar coords

highp float phi = atan(normCoord.y, normCoord.x); // to polar coords

r = r - mod(r, pixelSize.x) + 0.03;

phi = phi - mod(phi, pixelSize.y);

normCoord.x = r * cos(phi);

normCoord.y = r * sin(phi);

normCoord += normCenter;

mediump vec2 textureCoordinateToUse = normCoord / 2.0 + 0.5;

mediump vec4 color = texture2D(inputImageTexture, textureCoordinateToUse );

color = color - mod(color, 0.1);

gl_FragColor = color;

}

);

The shader code itself is enclosed in the SHADER_STRING() macro. We assign the shader string to a const NSString object. This constant is then passed to the superclass in the -init call to set up the filter object. There’s a whole lot of compiling and error checking and sending the shader to the GPU that we don’t have to worry about here. If you want to know what all is happening, you can read the Jeff LaMarche post about it here. GPUImage (and Cocos2d 2.0) use the GLProgram class that Jeff LaMarche sets up in that post (both with some modifications).

Let me say a few words about data types and operations in GLSL. The main data types are int, float, vector (vec2, vec3, vec4), and matrix (mat2, mat3, mat4). You can perform vector and matrix operations using simple arithmetic, e.g. vec2 + vec2. A scalar combined with a vector of the same base type also works, component by component (float * vec2 = vec2.x * float, vec2.y * float). A complete list of types can be found here (about halfway down). Finally, you can do things like ‘vec4.xyz’ to get a vec3.
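If the component-wise behavior is hard to picture, here is a rough Python stand-in using plain tuples. The helper names (vec_add, vec_scale, swizzle) are made up for this sketch; in GLSL you would just write a + b, s * v, and v.xyz.

```python
def vec_add(a, b):
    """vec + vec: add matching components."""
    return tuple(x + y for x, y in zip(a, b))


def vec_scale(s, v):
    """float * vec: multiply every component by the same scalar."""
    return tuple(s * x for x in v)


def swizzle(v, components):
    """Pick components by name, like v.xyz or v.xy in GLSL."""
    index = {"x": 0, "y": 1, "z": 2, "w": 3}
    return tuple(v[index[c]] for c in components)


summed = vec_add((1.0, 2.0), (0.5, 0.5))      # like vec2 + vec2
scaled = vec_scale(2.0, (0.25, 0.5))          # like float * vec2
rgb = swizzle((0.1, 0.2, 0.3, 1.0), "xyz")    # like vec4.xyz
```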

Let’s go through this shader line by line. The first four lines set up the variables. textureCoordinate is the vec2 that is set in the default vertex shader we looked at earlier. The center and pixelSize uniforms are the variables we’ll set up in the class itself. I’ll show you how to set those values in a minute. Finally, we have the inputImageTexture variable of type sampler2D. This uniform is set by the superclass, GPUImageFilter, and will be whatever image we supply to the filter class. It could be a still image or a frame from incoming video (either from the device’s camera or a movie file). sampler2D is a two dimensional texture data type.

You may have noticed that we use the highp qualifier a lot. We have to tell OpenGL ES 2.0 the precision level of our data types. As you can imagine, the higher the precision, the more accurate the data type is, but lower precision has the benefit of being faster. We can set it to lowp, mediump, or highp. There’s a post here that talks more about precision and what the actual constraints are.

A shader always has the main() function. Our normCoord variable is the location on our incoming texture that we want to use as the color for this fragment. We’ll manipulate this value in order to pixellate from a position in a polar coordinate system.

The first thing we’ll do is convert our coordinate system into a polar coordinate system. The textureCoordinate ranges from 0.0, 0.0 to 1.0, 1.0. The center uniform variable has the same range. In order to describe our screen in polar coordinates, we need a -1.0, -1.0 to 1.0, 1.0 coordinate. The first two lines convert into those values. The third line subtracts the center from normCoord. This effectively makes all our calculations originate at the center value we have set, instead of the actual center of our coordinate system.

From here we’ll convert from a cartesian to a polar coordinate system. Once we’re in polar coordinates, we run the same calculation we’d run in a regular pixellate filter: take the remainder of the value divided by the step size (pixelSize) and subtract that remainder. This snaps every value down to a multiple of the step size, so all the fragments within one step sample the same spot.
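The “subtract the remainder” trick is easier to see with numbers. Below is a small Python sketch of it. glsl_mod mirrors how the GLSL spec defines mod (x - y * floor(x / y)), and snap is a name I made up for the value - mod(value, step) expression in the shader.

```python
import math


def glsl_mod(x, y):
    """GLSL-style mod: x - y * floor(x / y)."""
    return x - y * math.floor(x / y)


def snap(value, step):
    """Snap value down to a multiple of step (value - mod(value, step))."""
    return value - glsl_mod(value, step)


# Every radius between 0.25 and 0.5 collapses to 0.25 with a step of 0.25.
snapped_radius = snap(0.37, 0.25)

# Angles from atan can be negative; the floor-based mod still snaps downward.
snapped_angle = snap(-0.1, 0.25)
```

Here snapped_radius comes out at 0.25 and snapped_angle at -0.25, which is why a whole wedge of fragments ends up reading the same texel.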

Once we’ve done that we convert the value back into a cartesian coordinate system and add our center value back in. Then we return it to the 0.0, 0.0 to 1.0, 1.0 range we need for the texture lookup and store it in textureCoordinateToUse. The next line passes that coordinate to the texture2D() call. This function is a texture lookup function. It takes two parameters, the two dimensional texture object (inputImageTexture in our case) and the coordinate (textureCoordinateToUse).
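Putting the coordinate steps together, here is a CPU-side Python sketch that follows the shader’s math line by line. It is only meant for poking at the numbers, not as a replacement for the shader; the function name and the tuple-based arguments are my own choices, and the 0.03 offset is copied from the shader as-is.

```python
import math


def glsl_mod(x, y):
    """GLSL-style mod: x - y * floor(x / y)."""
    return x - y * math.floor(x / y)


def polar_pixellate_coord(tex, center=(0.5, 0.5), pixel_size=(0.05, 0.05)):
    """Return the texture coordinate the shader would sample for tex."""
    # Move from the 0..1 range to -1..1, then make center the origin.
    cx, cy = 2.0 * center[0] - 1.0, 2.0 * center[1] - 1.0
    nx, ny = 2.0 * tex[0] - 1.0 - cx, 2.0 * tex[1] - 1.0 - cy

    # Cartesian -> polar.
    r = math.hypot(nx, ny)
    phi = math.atan2(ny, nx)

    # Snap radius and angle to their step sizes (0.03 offset as in the shader).
    r = r - glsl_mod(r, pixel_size[0]) + 0.03
    phi = phi - glsl_mod(phi, pixel_size[1])

    # Polar -> cartesian, restore the center, and go back to the 0..1 range.
    nx, ny = r * math.cos(phi) + cx, r * math.sin(phi) + cy
    return (nx / 2.0 + 0.5, ny / 2.0 + 0.5)


# Two nearby fragments that fall into the same polar cell.
a = polar_pixellate_coord((0.80, 0.60))
b = polar_pixellate_coord((0.805, 0.602))
```

Both a and b land on (essentially) the same sample coordinate, which is exactly what produces the blocky polar “pixels.”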

Finally, we run the mod operation on the color value, using a hard coded step value of 0.1. This reduces each of the red, green, and blue components (and alpha, but alpha is always 1.0 in our case so . . . ) from 256 steps (256 cubed, or about 16.8 M colors) to roughly 10 steps (about 1,000 colors).
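The posterize step is the same snapping trick applied to each color channel. A quick Python sketch, with colors as (r, g, b, a) tuples in the 0.0 to 1.0 range (the posterize name and the default step argument are mine; the shader hard codes 0.1):

```python
import math


def glsl_mod(x, y):
    """GLSL-style mod: x - y * floor(x / y)."""
    return x - y * math.floor(x / y)


def posterize(color, step=0.1):
    """Snap every channel down to a multiple of step."""
    return tuple(c - glsl_mod(c, step) for c in color)


# 0.57 -> 0.5, 0.23 -> 0.2, 0.99 -> 0.9 (give or take float rounding).
flattened = posterize((0.57, 0.23, 0.99, 1.0))
```

Values that sit exactly on a step boundary can land on either side because of floating point rounding, so don’t read too much into the exact count of levels.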

I feel that my explanation of how and why we move between cartesian and polar coordinates is a little weak. For a more detailed description of using the polar coordinate system in GLSL, there’s a great post (now available on the Wayback Machine) here (that’s where I got a bunch of this code from).

That’s our fragment shader. It will run very fast. If we were to do the same operation on the CPU it would take much, much longer to accomplish the same goal. In many cases, the GPU can do live video filtering that wouldn’t be possible on the phone’s CPU.

Finishing the rest of the Class

Once we’ve written the shader, the only other pieces we are required to include are the initialization and the setters for the uniform variables. In the initialization we will set the shader string, set the uniform pointers, and set up any default values.

On to the rest of the code. Add the following after the @implementation:

@synthesize center = _center;

@synthesize pixelSize = _pixelSize;

#pragma mark -

#pragma mark Initialization and teardown

- (id)init;

{

if (!(self = [super initWithFragmentShaderFromString:kGPUImagePolarPixellatePosterizeFragmentShaderString]))

{

return nil;

}

pixelSizeUniform = [filterProgram uniformIndex:@"pixelSize"];

centerUniform = [filterProgram uniformIndex:@"center"];

self.pixelSize = CGSizeMake(0.05, 0.05);

self.center = CGPointMake(0.5, 0.5);

return self;

}

Initializing the class is just a matter of passing in our shader string, and then setting up any and all uniforms. The call to initWithFragmentShaderFromString: passes our shader into the appropriate methods to check and compile it so that it’s ready to run on the GPU. If we wanted to also supply a vertex shader (different than the default) there’s also a call to do that.

We have to call [filterProgram uniformIndex:] for each uniform we supply in our class. This looks up the uniform in the shader by the supplied name and stores its location in our GLint, so that the data we pass in later goes to the right place.

Finally, we set some default values in the init so that our filter will work, even without the filter’s user needing to supply some values.

The last thing we need to do is set up the setters for the uniform values:

- (void)setPixelSize:(CGSize)pixelSize

{

_pixelSize = pixelSize;

[GPUImageOpenGLESContext useImageProcessingContext];

[filterProgram use];

GLfloat pixelS[2];

pixelS[0] = _pixelSize.width;

pixelS[1] = _pixelSize.height;

glUniform2fv(pixelSizeUniform, 1, pixelS);

}

- (void)setCenter:(CGPoint)newValue;

{

_center = newValue;

[GPUImageOpenGLESContext useImageProcessingContext];

[filterProgram use];

GLfloat centerPosition[2];

centerPosition[0] = _center.x;

centerPosition[1] = _center.y;

glUniform2fv(centerUniform, 1, centerPosition);

}

These two setters are very similar to each other, so I’ll explain them as though they are the same.

Our setter first sets the instance variable to the supplied value. It then calls [GPUImageOpenGLESContext useImageProcessingContext] and [filterProgram use]. These two calls set the state of OpenGL so that the supplied data goes to the right program. Remember that the key to the framework is that the filters can be chained together. Those two calls make sure that we have the right shader program loaded when we pass in the data.

Next we set up a float array to pass into the call. glUniform2fv is one of many similar calls that pass data of a certain type into our shader program. It takes three arguments. The first is the location of the uniform (the GLint we looked up in init). The second is the count (how many values we’re passing). You may think that it should be two because we are passing two floats. However, the ‘2’ in glUniform2fv already says that each value is two floats long, so a count of 1 means one vec2. If we passed in 2 for the middle parameter, the call would expect two vec2s, or four floats. The final parameter is the pointer to the data we are passing.
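The count parameter trips a lot of people up, so here is a tiny Python model of how a 2fv-style call reads its data. To be clear, this is not an OpenGL binding and read_2fv is a made-up name; it only models the “count times two floats” bookkeeping.

```python
def read_2fv(count, values):
    """Model of a 2fv-style upload: read `count` vec2s from a flat float list."""
    needed = count * 2  # the "2" in 2fv: every value is two floats long
    if len(values) < needed:
        raise ValueError("need %d floats, got %d" % (needed, len(values)))
    return [tuple(values[i:i + 2]) for i in range(0, needed, 2)]


# count = 1: one vec2, which is what our setters pass.
one_vec2 = read_2fv(1, [0.5, 0.5])

# count = 2: two vec2s, so four floats are consumed.
two_vec2s = read_2fv(2, [0.1, 0.2, 0.3, 0.4])
```

Passing a count of 2 with only two floats would ask for more data than the array holds, which this model reports as an error; in real C code it means reading past the end of the array.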

Structure of GPUImage

GPUImage has classes for inputs (GPUImageVideoCamera, GPUImageMovie, GPUImagePicture), Filters, and outputs (GPUImageView, GPUImageMovieWriter, GPUImageRawData). You chain inputs through one or more filters and then to the output. Each connection is made by adding the next link in the chain as a target to the current link. So, a series would look like, create a camera, filter, and view. Then add the filter as a target to the camera and add the view as a target to the filter. This creates a chain that pulls the framebuffer contents (the output of the OpenGL operations) through the chain to the final output.

One object can have multiple inputs and multiple outputs. Some filters have multiple inputImageTextures, indeed, there is a built in inputImageTexture2 handle that will be available if you set a filter as the target of multiple sources. For example, the GPUImageChromaKeyFilter would be set as the target for both a GPUImagePicture and GPUImageVideoCamera source. This filter replaces any green within a certain range in the video with the contents of the supplied picture.

You can send the output of one filter to several views, or to a view and to a movie writer or to another filter, which sends it to another view. All these structures have examples in the sample code showing you how it’s done. It’s a very flexible structure.
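If the target idea is new to you, here is a toy Python model of it. It has nothing to do with GPUImage’s real classes; Node, add_target, and push are names I picked for the sketch, and the “frames” are just strings so you can see what each link did to them.

```python
class Node:
    """One link in the chain: a source, a filter, or an output."""

    def __init__(self, name, transform=None):
        self.name = name
        self.transform = transform or (lambda frame: frame)
        self.targets = []
        self.received = []

    def add_target(self, target):
        """Register the next link that should receive this node's output."""
        self.targets.append(target)

    def push(self, frame):
        """Process a frame, remember it, and hand it to every target."""
        result = self.transform(frame)
        self.received.append(result)
        for target in self.targets:
            target.push(result)


camera = Node("camera")
rotate = Node("rotate", lambda f: f + ">rotated")
pixellate = Node("pixellate", lambda f: f + ">pixellated")
view = Node("view")
writer = Node("writer")

camera.add_target(rotate)
rotate.add_target(pixellate)
pixellate.add_target(view)    # one filter can feed several outputs
pixellate.add_target(writer)

camera.push("frame0")
```

After the push, both view and writer have received "frame0>rotated>pixellated", which is the fan-out described above: one filter feeding a view and a movie writer at the same time.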

Setting up the View Controller Class

Now that we have a working filter, we’ll set up a simple application to use it. Let’s go to the ViewController class that came with the Single View template we set up earlier. Change the following lines in the implementation file:

#import "JGViewController.h"

#import "GPUImage.h"

#import "GPUImagePolarPixellatePosterizeFilter.h"

@interface JGViewController () {

GPUImageVideoCamera *vc;

GPUImagePolarPixellatePosterizeFilter *ppf;

}

@end

@implementation JGViewController

- (void)viewDidLoad

{

[super viewDidLoad];

vc = [[GPUImageVideoCamera alloc] initWithSessionPreset:AVCaptureSessionPreset640x480 cameraPosition:AVCaptureDevicePositionFront ];

GPUImageRotationFilter *rf = [[GPUImageRotationFilter alloc] initWithRotation:kGPUImageRotateRight];

ppf = [[GPUImagePolarPixellatePosterizeFilter alloc] init];

[vc addTarget:rf];

[rf addTarget:ppf];

GPUImageView *v = [[GPUImageView alloc] init];

[ppf addTarget:v];

self.view = v;

[vc startCameraCapture];

// Do any additional setup after loading the view, typically from a nib.

}

-(void)touchesBegan:(NSSet *)touches withEvent:(UIEvent *)event {

CGPoint location = [[touches anyObject] locationInView:self.view];

CGSize pixelS = CGSizeMake(location.x / self.view.bounds.size.width * 0.5, location.y / self.view.bounds.size.height * 0.5);

[ppf setPixelSize:pixelS];

}

-(void)touchesMoved:(NSSet *)touches withEvent:(UIEvent *)event {

CGPoint location = [[touches anyObject] locationInView:self.view];

CGSize pixelS = CGSizeMake(location.x / self.view.bounds.size.width * 0.5, location.y / self.view.bounds.size.height * 0.5);

[ppf setPixelSize:pixelS];

}

We set up a couple of private instance variables, the GPUImageVideoCamera and the GPUImagePolarPixellatePosterizeFilter.

Then we create a chain of filters. We set up the video camera, with the appropriate resolution preset and device position. Then we create a rotation filter because the orientation of the incoming video isn’t correct when we’re looking at the phone in portrait mode. We then chain them in this order: video camera, rotation filter, polar pixellate posterize filter, and finally the GPUImageView that we’ll use to display the contents on the screen.

Finally, we set the view of the current view controller class to the GPUImageView. In order for this to work we’ll need to set up the view in the xib (storyboard actually). Go and do that now.

At this point we can run it and we’ll have a working live video filter. But it might be fun to add some interactivity. The touchesMoved: and touchesBegan: methods use the location of the touch to set the pixelSize uniform variable in our shader. The smallest pixels are achieved by a touch on the top left and the largest pixels by a touch on the bottom right. You can experiment with this filter to get very different results.
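The touch handling is just a bit of arithmetic, sketched here in Python so you can try different numbers. The function and parameter names are mine. The min_step argument is an addition that is not in the sample project: it defaults to 0.0, which matches the original code, but a touch at the exact top left then gives a step of zero, so you may want a small floor.

```python
def pixel_size_for_touch(x, y, width, height, scale=0.5, min_step=0.0):
    """Map a touch location in a view to a (width, height) pixelSize."""
    step_r = max(x / width * scale, min_step)      # radius step grows left to right
    step_phi = max(y / height * scale, min_step)   # angle step grows top to bottom
    return (step_r, step_phi)


# A 320 x 480 point view, touched in a few places.
top_left = pixel_size_for_touch(0.0, 0.0, 320.0, 480.0)
middle = pixel_size_for_touch(160.0, 240.0, 320.0, 480.0)
bottom_right = pixel_size_for_touch(320.0, 480.0, 320.0, 480.0)
```

So the middle of the screen gives (0.25, 0.25) and the bottom right gives the maximum of (0.5, 0.5).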

Congratulations, you’ve written your first shader.

Other Examples of Image Processing with Shaders

In order to give you some ideas of what else you might do, here are a handful of examples that perform different operations on an incoming image:

Reduce the red and green levels and increase the blue:

lowp vec4 color = texture2D(inputImageTexture, textureCoordinate);

lowp vec4 alter = vec4(0.1, 0.5, 1.5, 1.0);

gl_FragColor = color * alter;

Reduce Brightness:

lowp vec4 textureColor = texture2D(inputImageTexture, textureCoordinate);

gl_FragColor = vec4((textureColor.rgb + vec3(-0.5)), textureColor.w);
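To get a feel for what those two color tweaks do to actual values, here is a small Python sketch. Colors are (r, g, b, a) tuples in the 0.0 to 1.0 range and the function names are mine. I’m assuming the result is written to an ordinary 8-bit render target, where out-of-range values end up clamped to 0.0 to 1.0, so the sketch clamps explicitly.

```python
def clamp01(x):
    """Clamp a channel to the displayable 0.0..1.0 range."""
    return min(max(x, 0.0), 1.0)


def scale_channels(color, factors):
    """Component-wise multiply, like color * alter in the shader."""
    return tuple(clamp01(c * f) for c, f in zip(color, factors))


def add_brightness(color, amount):
    """Add amount to r, g, b and leave alpha alone."""
    r, g, b, a = color
    return (clamp01(r + amount), clamp01(g + amount), clamp01(b + amount), a)


gray = (0.5, 0.5, 0.5, 1.0)
bluish = scale_channels(gray, (0.1, 0.5, 1.5, 1.0))
darker = add_brightness((0.8, 0.5, 0.2, 1.0), -0.5)
```

Notice that darker bottoms out at 0.0 in the green and blue channels: subtracting a constant crushes the shadows, which is worth keeping in mind when you pick the amount.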

Blur an image:

mediump float texelWidthOffset = 0.01;

mediump float texelHeightOffset = 0.01;

mediump vec2 firstOffset = vec2(1.5 * texelWidthOffset, 1.5 * texelHeightOffset);

mediump vec2 secondOffset = vec2(3.5 * texelWidthOffset, 3.5 * texelHeightOffset);

mediump vec2 oneStepLeftTextureCoordinate = textureCoordinate - firstOffset;

mediump vec2 twoStepsLeftTextureCoordinate = textureCoordinate - secondOffset;

mediump vec2 oneStepRightTextureCoordinate = textureCoordinate + firstOffset;

mediump vec2 twoStepsRightTextureCoordinate = textureCoordinate + secondOffset;

mediump vec4 fragmentColor = texture2D(inputImageTexture, textureCoordinate) * 0.2;

fragmentColor += texture2D(inputImageTexture, oneStepLeftTextureCoordinate) * 0.2;

fragmentColor += texture2D(inputImageTexture, oneStepRightTextureCoordinate) * 0.2;

fragmentColor += texture2D(inputImageTexture, twoStepsLeftTextureCoordinate) * 0.2;

fragmentColor += texture2D(inputImageTexture, twoStepsRightTextureCoordinate) * 0.2;

gl_FragColor = fragmentColor;
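The blur boils down to averaging five samples with equal weights of 0.2. Here is a simplified Python sketch of that idea. It works on a single row of gray values with whole-texel offsets, whereas the shader steps by 1.5 and 3.5 texel offsets in both x and y, and it assumes reads past the edge are clamped to the edge. The function name is mine.

```python
def blur_5tap(samples, index, offsets=(-2, -1, 0, 1, 2), weight=0.2):
    """Average five neighboring samples around index with equal weights."""
    last = len(samples) - 1
    total = 0.0
    for offset in offsets:
        clamped = min(max(index + offset, 0), last)  # clamp-to-edge lookup
        total += samples[clamped] * weight
    return total


# A single bright texel gets spread out to a fifth of its brightness.
spike = blur_5tap([0.0, 0.0, 1.0, 0.0, 0.0], 2)

# A flat area stays the same, even at the edge.
flat = blur_5tap([0.5, 0.5, 0.5, 0.5, 0.5], 0)
```

Because the five weights add up to 1.0, the overall brightness of the image stays the same; only the detail gets smeared.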

You can take a look at the actual filters for a bunch more examples, including edge detection, fisheye distortion, and a ton of other cool things. You can get the sample project here.

Thanks for this tutorial. I have been using GPUImage for several weeks now in my Photo App and I’m going to write my own filter following your article.

GPUImage is designed to be easy to add filters into and people are already contributing their own efforts through Github.

Awsome tutorial! I visited because of your iDevBlogADay perlin article. Great timing too, as I had just finished making a halfway decent halftone filter with CoreGraphics and pixelbuffers and wanted to go deeper on filters but have never touched OpenGL before.

Some parts are already outdated, 😉

GPUImageRotationFilter was deprecated: https://github.com/BradLarson/GPUImage/issues/256

Thanks you when you share the tutorial

Awesome Trial Also! However I noticed that GPUImage will crash without putting the OpenGL update on runAsynchronouslyOnVideoProcessingQueue.

runAsynchronouslyOnVideoProcessingQueue(^{

[GPUImageOpenGLESContext useImageProcessingContext];

[filterProgram use];

GLfloat centerPosition[2];

centerPosition[0] = _center.x;

centerPosition[1] = _center.y;

glUniform2fv(centerUniform, 1, centerPosition);

});

Thanks! Your tutorial ‘s very helpful. Can i use GPUImage framework to detect a array similar-colors from 1 image when user touch on mageview?

Nice Article. Here’s a Camera App I wrote using GPUImage.

Whoops, here the link the the Zazado camera app

Great tutorial, thanks!

I’ve build an iOS app that has a Cocos2d V3 view controller and am now trying to add a second view controller that has a GPUImage child but am getting OpenGL errors. I suspect that there’s a common resource that is getting accessed by the 2 frameworks.

Have you ever integrated GPUImage and Cocos2d into a single app?

Thanks!

I haven’t tried to use both projects in the same app. I have heard other’s try and have problems like what you are describing. I can’t give detailed advice on how to resolve the issue, but I suspect it would require code to use a separate OpenGL context every time either framework tries to alter the OpenGL state. GPUImage seems to be pretty responsible about setting the gpu context, but I don’t know if Cocos2d is as vigilant.

I’m getting a ‘Use of undeclared identifier’ error for the two

[GPUImageOpenGLESContext useImageProcessingContext]; calls…